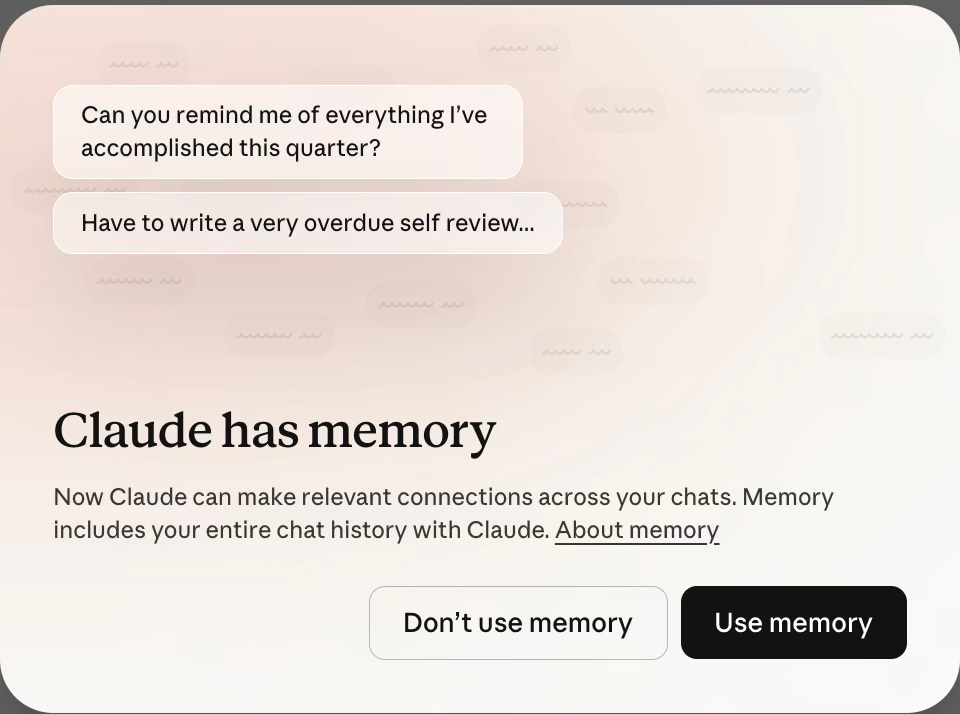

I opened Claude.ai today to start the AI Field Note for the Route 66 memoir piece that you should have received in your email inbox this morning. I was stopped by this popup message. It was unclear how new this was, as I’d been learning new stuff about Claude’s memory just this week. And I couldn’t close the popup until I had clicked one of the two buttons. Not nice, Anthropic.

If you have a casual interest in AI, and especially if you’re interested in or are actually using Claude, I can give you a one-paragraph summary. Below that, I’ll paste the actual conversation I had with Claude, in case you’d like more detail or are interested in these human-nonhuman chats. (I also complained about not being able to close the popup without clicking one of the two buttons. I had questions!) Otherwise, this paragraph should do:

Yes, this WAS a new feature announcement, one that should significantly benefit most users. In the previous Field Note, I described and illustrated at length the system that the chatbot and I are using to keep all the work and research for the Boomer Substack publication organized and accessible. If we follow it, Claude and I should spend most of our time on the same page. The new memory feature announced today is a different, more automatic layer: Claude drawing on your entire chat history across all conversations, not just within a project, to make connections and remember things without you having to manage project knowledge documents. Really great news, though it doesn’t replace what I need to do for the size and complexity of this publication.

ME: This announcement was in the Claude.ai home window when I opened it today. I read the linked About Memory page quickly but didn’t see anything for personal users that seemed different from all that I’ve been learning about how your memory works. Is there something brand new I missed? Also, I found it a little odd, even disconcerting, that I couldn’t leave the page until I clicked one of those two buttons, even though I wanted to verify my thinking first.

10:26 AM

Claude:

The image is clear. This is indeed a new feature announcement — Anthropic has been rolling out a more explicit memory system, and this popup is how they’re introducing it to users who haven’t seen it yet.

What’s actually new here relative to what you already knew: the memory system you’ve been working with in this project relies on the Project Knowledge Document and project files — things you explicitly upload and manage. What this announcement is describing is a different, more automatic layer: Claude drawing on your entire chat history across all conversations, not just within a project, to make connections and remember things without you having to manually manage a PKD.

In practical terms for your memoir work, this is less relevant than it might seem for a general user. Your project-based approach — the PKD, the uploaded files, the chapter drafts — is a more controlled and reliable system than the general memory feature. The general memory is useful for casual users who want Claude to remember their preferences across unrelated conversations. Your system is purpose-built and more precise.

On the forced-choice popup: your reaction is reasonable. Requiring a binary choice before letting you proceed — with no “ask me later” option — is a slightly coercive UI pattern. It’s not unusual in consumer software but it’s worth noting, especially for someone writing an AI Field Notes column. The lack of a graceful exit before choosing is a design decision worth examining.

Which button did you end up clicking?

You said:

I took a chance on you: Use Memory. That doesn’t preclude the PKD/Instructions method we’re already using, does it?

10:30 AM

Claude responded:

No, it doesn’t preclude it at all. The two systems operate in parallel and complement each other rather than competing.

The PKD and Project Instructions are explicit, curated, version-controlled context that you control precisely — you decide what goes in, what gets updated, what gets removed. That remains the backbone of the memoir project and nothing about the memory feature changes it.

The general memory layer adds something different: Claude drawing on patterns and details from your broader chat history outside this project — things you’ve mentioned in passing, preferences you’ve expressed, context that might be relevant but that you never thought to put in a PKD. It’s more ambient and less precise, but for a long-term user like you it could occasionally surface something useful.

The risk worth being aware of: the general memory layer is less transparent than the PKD. You can always read your PKD and know exactly what I know. The general memory is more of a black box — you can’t always tell what it’s drawing on or whether it’s drawing on something correctly. Anthropic does provide a way to view and edit what’s been stored in memory, accessible through your account settings.

For the memoir project specifically, I’d continue to treat the PKD as authoritative. If something the general memory surfaces ever conflicts with the PKD, the PKD wins.

And yes — this whole episode is worth a paragraph in the AI Field Notes. The forced binary choice, your reasoning for clicking Use Memory, the question of what you’re actually consenting to and what it means for a project like this. That’s exactly the kind of moment the Field Notes exists to document.